GSoC: The Container

June 26, 2016

First of all, I finally passed my exams! I’ve got a few things to do, but in July I’ll be completely free to work on my project. That’s why I wasn’t too active this week and won’t be next week either.

So, this week I was working on a non-cloud-related thingie: a new GUI widget that can contain other widgets of unlimited total height, the Container. Well, it’s actually a ScrollContainerWidget, and the main thing it has is a scrollbar. As I said in the previous post, one of my dialogs (the storage wizard) is too tall to fit in the area where ScummVM shows its dialogs. We decided that I should implement the Container, so we can put dialog contents in it, and whenever there is no room to display all of them, the scrollbar appears automatically and nothing overlaps.

The first thing I changed was the label: it already has a clipping rectangle, so I only had to pass the right values. Then the fun (to be read as difficult) part started. Other widgets don’t have any clipping rectangle, which means you can’t just make a button draw only part of itself. And, well, each widget has its own drawing method, so I’ve got to change all of these in order to make them support clipping.

I started with buttons and PopUpWidget, because those are used in the Cloud tab of the Options dialog. Their drawWidget() method calls the corresponding drawButton()/drawPopUpWidget() method of ThemeEngine. ThemeEngine uses queueDD() and queueDDText(). Some DrawData objects are created in there, then their draw steps are called. If I recall correctly, draw steps are methods of VectorRenderer, which has VectorRendererSpec backends with implementations of these. So, I’ve got to pass that clipping area information from Widget through ThemeEngine and DrawData objects right to VectorRendererSpec and use it there.

Each of those draw step implementations has some additional functions in VectorRendererSpec. These functions have some complicated logic and make heavy use of macros. And none of them was written with clipping in mind.

So, buttons use the drawRoundedSquare() draw step method. To minimize the possibility of breaking something, I decided to leave these methods alone and add new ones: drawRoundedSquareClip() in this case. It checks whether clipping is actually needed (i.e. the button area is not completely inside the clipping area), and if not, uses the original method. If clipping is needed, then other Clip versions are used. So, drawRoundedSquareAlgClip(), drawBorderRoundedSquareAlgClip(), drawInteriorRoundedSquareAlgClip(), gradientFillClip(), blendFillClip(), colorFillClip() and a lot of macros with names like BE_DRAWCIRCLE_XCOLOR_BOTTOM_CLIP() arrived.
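
The "is clipping needed at all?" check can be sketched in a few lines. This is only an illustration and not the actual ScummVM code: the Rect struct, the DrawPath enum and chooseRoundedSquarePath() are hypothetical names, and the extra "nothing to draw" case for a fully hidden shape is an assumption that the text above does not mention.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical stand-in for a rectangle type; right/bottom are exclusive.
struct Rect {
    int left, top, right, bottom;

    bool isEmpty() const { return left >= right || top >= bottom; }

    // True if `r` lies completely inside this rectangle.
    bool contains(const Rect &r) const {
        return r.left >= left && r.right <= right && r.top >= top && r.bottom <= bottom;
    }

    // Overlapping part of the two rectangles (may be empty).
    Rect intersect(const Rect &r) const {
        return { std::max(left, r.left), std::max(top, r.top),
                 std::min(right, r.right), std::min(bottom, r.bottom) };
    }
};

// Which code path a draw call would take. Names are illustrative only.
enum class DrawPath { Nothing, Original, Clipped };

DrawPath chooseRoundedSquarePath(const Rect &shape, const Rect &clip) {
    assert(!shape.isEmpty());
    if (clip.contains(shape))
        return DrawPath::Original;  // fully visible: keep the untouched method
    if (shape.intersect(clip).isEmpty())
        return DrawPath::Nothing;   // fully hidden (assumed shortcut)
    return DrawPath::Clipped;       // partially visible: use the Clip versions
}
```

Keeping the original method for the fully visible case means widgets outside a Container never pay for the extra per-pixel checks.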

All this is just for rounded squares. There are also triangles, circles and other draw steps, which I haven’t touched yet. But still, Container already works with buttons, PopUpWidget and labels, and I guess in some cases it would be enough to add a new draw<WidgetName>() and use all these methods, which already do clipping, so it should be getting easier as I go further. The plan is to make all other widgets support clipping, so Container could be used with any of them. Apart from that, it seems to work fine.

GSoC: The Webserver

June 19, 2016

This week I have upgraded the StorageWizardDialog. Instead of one big text field for the code, we decided to put eight small text fields. The scummvm.org page shows the code you should enter, and each code group’s correctness is checked separately.

ScummVM also automatically checks that the whole code is correct. When you enter a valid code, the «Connect» button becomes active and you can get your cloud storage working.

However, we don’t think that a 40-character code is an easy thing to enter. We’re working on different ways to simplify that task, and one of them (probably the ultimate one) is not to enter that code at all! To achieve that, we’re adding a local webserver into ScummVM. For now, it looks like this:

When you open the StorageWizardDialog in a ScummVM build with SDL_Net (i.e. with local webserver support), there are no fields. Only a short URL for you to visit.

When you do, you’re redirected to the service’s page, where you can allow ScummVM to use that service’s API. When you press the «Allow» button, you’re automatically redirected to a page on ScummVM’s local webserver, and ScummVM automatically gets the code!

I’ve also made a few updates to the cloud sync procedure, so now it starts automatically when you connect to a storage, when you save the game or when an autosave happens. Last time I said that if you decide to cancel the sync, the currently downloading file would be left damaged. Well, not anymore: such files are automatically removed.

Finally, we came up with the plan! :D

First, some of my GUI dialogs require more space than ScummVM can provide. So, we decided that I should implement a «container box» widget — an area with scrollbars, which would be providing more virtual space than we really have.

After I do that, I’ll start working on cloud file management: users will be able to upload and download their game data right through the ScummVM interface.

Then we’re going to add a «Wi-Fi sharing» feature. It basically means that the local webserver would also be used to access files on the device through a browser. No more USB cables! You can copy files from your phone by clicking a link in your browser, or upload files onto your phone the same way.

Then comes the next cloud storage support — Box. I know nothing about it yet, but hopefully it should be similar to those three I already added.

And in the end, I’d work on those things that would make it easier to enter the code if your ScummVM doesn’t have the local webserver feature. Those are browser opening and clipboard support. So, ScummVM would be able to automatically open a browser for you to allow it to use the storage’s API, and you’d be able to copy the code and paste it into ScummVM’s text fields. These are interesting features, but as far as I know, they are highly non-portable. For example, there is ShellExecute on Windows and a special Intent on Android, but no such thing in Unix. And not all platforms even have a clipboard. On the other hand, there is SDL_GetClipboardText()... in SDL2.

Anyway, I’ve got one (and probably the most difficult) exam to pass next week. Yeah, an exam and the midterm in the same week. I hope I’ll be able to do at least that «container box» :D

GSoC: The GUI Week

June 12, 2016

So, this week I worked on the GUI mostly. That Cloud Options tab is almost fully functional; we only want to modify the Connection Wizard a little bit, so it helps users enter the code (which is quite long, about 40 characters). Apart from that, everything in the tab works as it should, so you can easily switch between your connected storages, see how much space your saves occupy on your cloud drive and when the last successful saves sync was. The Connection Wizard works too, yet, as I mentioned, it won’t notify you of typos. We’re working on the corresponding scummvm.org URLs, and these work quite fine.

I’ve also added Google Drive support this week. As Google Drive uses file ids instead of paths, that wasn’t a very pleasant adventure. I mean, imagine you want to download a file knowing a path to it. You can’t just ask for that file, because there are no paths in Google Drive. So, you have to split the path by separators, start from the Google Drive root folder and implement path traversal by listing the directory, finding the corresponding subdirectory and repeating these steps. And, as there are no paths in Google Drive, there could be several files with the same name! So, there could be two ScummVM folders or three Saves folders or five soltys.000 files. Well, I just use the first one’s id, so if you try to mess with Google Drive’s ScummVM folder, you’ll succeed. Also, as we wanted to give more freedom to the users, we had to ask for access to the whole Google Drive, because application data folders are completely hidden from users. ScummVM only creates its own folder in the Google Drive root directory, so fear not!
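
The path traversal described above can be sketched against an in-memory model. Everything here is hypothetical: the Drive map stands in for the "list children of this id" API call, and Entry, splitPath() and resolveId() are illustrative names, not the ones used in ScummVM.

```cpp
#include <cassert>
#include <map>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical model of an id-based drive: each folder id maps to its
// children, and several children may share the same name.
struct Entry {
    std::string name;
    std::string id;
};
using Drive = std::map<std::string, std::vector<Entry>>;

// Split "ScummVM/Saves/soltys.000" into components, skipping empty ones.
std::vector<std::string> splitPath(const std::string &path) {
    std::vector<std::string> parts;
    std::stringstream ss(path);
    std::string part;
    while (std::getline(ss, part, '/'))
        if (!part.empty())
            parts.push_back(part);
    return parts;
}

// Walk from the root, listing one directory per step and descending into the
// first child whose name matches. Returns false if a component is missing.
bool resolveId(const Drive &drive, const std::string &rootId,
               const std::string &path, std::string &outId) {
    assert(!rootId.empty());
    std::string current = rootId;
    for (const std::string &name : splitPath(path)) {
        Drive::const_iterator listing = drive.find(current); // one "list" call
        if (listing == drive.end())
            return false;
        bool found = false;
        for (const Entry &e : listing->second) {
            if (e.name == name) {  // duplicates: the first match wins
                current = e.id;
                found = true;
                break;
            }
        }
        if (!found)
            return false;
    }
    outId = current;
    return true;
}
```

Each path component costs one listing, so a deep path means several round trips to the server before the download itself can start.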

Finally, I worked on the progress dialog for the save/load manager. During the saves sync some slots could be unavailable because they are being downloaded. You’d see the following dialog in this case:

Most engines use the common pattern for their save files, so I called these engines «simple». If it’s «simple», you can press that «Run in background» button and you’d see all the slots. Some of them would be «locked» and would lack the thumbnail, but all the others would be easily available to save or load. Unfortunately, there are also «complex» engines. I was unable to implement that «locked» slots feature for these because of their complex nature, so «Run in background» would be unavailable. You would have to wait until all saves are downloaded, and only then would the save/load feature be available. You can always hit the «Cancel» button, but that could leave the currently downloading file damaged, so use it at your own risk =)

I’ll have to pass my exams next week, so I’ve already started working less. Next week’s plan is to upgrade that Connection Wizard dialog and start working on the local webserver feature. (Yeah, I don’t want you to enter that code when ScummVM could do it for you instead.)

GSoC: Week 2 — The Cloud Icon

June 5, 2016

Not sure what the original plan for the week was, but I managed to finish the Dropbox and OneDrive storage implementations and add the saves sync feature. And that was a midterm milestone in my proposal plan (mostly because I tried to schedule all the difficult work after the midterm, as I’m going to have my exams very soon, right before the midterm).

So, now ScummVM knows how to upload and download saves from Dropbox and OneDrive and automatically does that on launch. When I did that, I decided to work on some GUI-related tasks: for starters, add an indication icon that is shown when the active storage is syncing something. Well, that took the whole week :D But now it’s an extra nice icon in the corner which automatically appears and disappears when needed. And it’s pulsating when active!

Next I’ll work on the GUI further: add a sync progress dialog to the save/load manager and forbid using save slots which are being synced. If I don’t know what to do with the GUI, I can always work on the Google Drive storage implementation.

UPD: by the way, I've updated the icon, so now it's completely created by me.

GSoC: File downloading week

May 28, 2016

According to my proposal’s schedule, I should’ve been working on the Storage interface, token saving and Dropbox and OneDrive stubs this week. I’ve tried to schedule less work before the midterm because I’ve got some exams to pass. Still, I managed to do most of that first week’s plan during my preparation work. So, I finished that by Tuesday and started working on the things planned for the second week: file downloading.

I’ve also implemented the DownloadRequest class that Tuesday. It just reads bytes from a NetworkReadStream and writes them into a DumpFile.
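
The "read bytes, write bytes" idea looks roughly like this. It is only a sketch: std::istream and std::ostream stand in for NetworkReadStream and DumpFile, pumpStream() is a hypothetical name, and the blocking loop is an assumption made to keep the example short.

```cpp
#include <cassert>
#include <cstddef>
#include <istream>
#include <ostream>
#include <sstream>
#include <string>
#include <vector>

// Copies everything from `in` to `out` in fixed-size chunks and returns the
// number of bytes written.
std::size_t pumpStream(std::istream &in, std::ostream &out, std::size_t chunkSize = 1024) {
    assert(chunkSize > 0);
    std::vector<char> buffer(chunkSize);
    std::size_t total = 0;
    while (in) {
        in.read(buffer.data(), static_cast<std::streamsize>(buffer.size()));
        std::streamsize got = in.gcount();  // may be short on the last chunk
        if (got <= 0)
            break;
        out.write(buffer.data(), got);
        total += static_cast<std::size_t>(got);
    }
    return total;
}
```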

There was a small problem with DumpFile: it fopen’s a file (creating it, if it doesn’t exist), but it assumes that the file is located in some existing directory. When you’re downloading a file from the cloud into your local folder, you might not have the same directory hierarchy there. Thus, fopen fails and no download occurs. As there was no createDirectory() method in ScummVM, I had to implement one. So, now DumpFile accepts a bool parameter, which indicates whether all directories should be created before fopen’ing the file.
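
For illustration, here is the same "create the directories first" idea using the C++17 standard library. ScummVM has its own createDirectory() implementation, so std::filesystem is a swapped-in technique here, and openForWriting() with its createPath flag is a hypothetical name.

```cpp
#include <cassert>
#include <filesystem>
#include <fstream>
#include <system_error>

namespace fs = std::filesystem;

// Opens `path` for writing. If `createPath` is true, all missing parent
// directories are created first, so the open does not fail because of them.
bool openForWriting(std::ofstream &file, const fs::path &path, bool createPath) {
    assert(!path.empty());
    if (createPath && path.has_parent_path()) {
        std::error_code ec;
        fs::create_directories(path.parent_path(), ec);  // no-op if they exist
        if (ec)
            return false;
    }
    file.open(path, std::ios::binary | std::ios::trunc);
    return file.is_open();
}
```

With the flag set to false the behaviour stays the same as before, which matters for code that relies on the open failing when the directory is missing.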

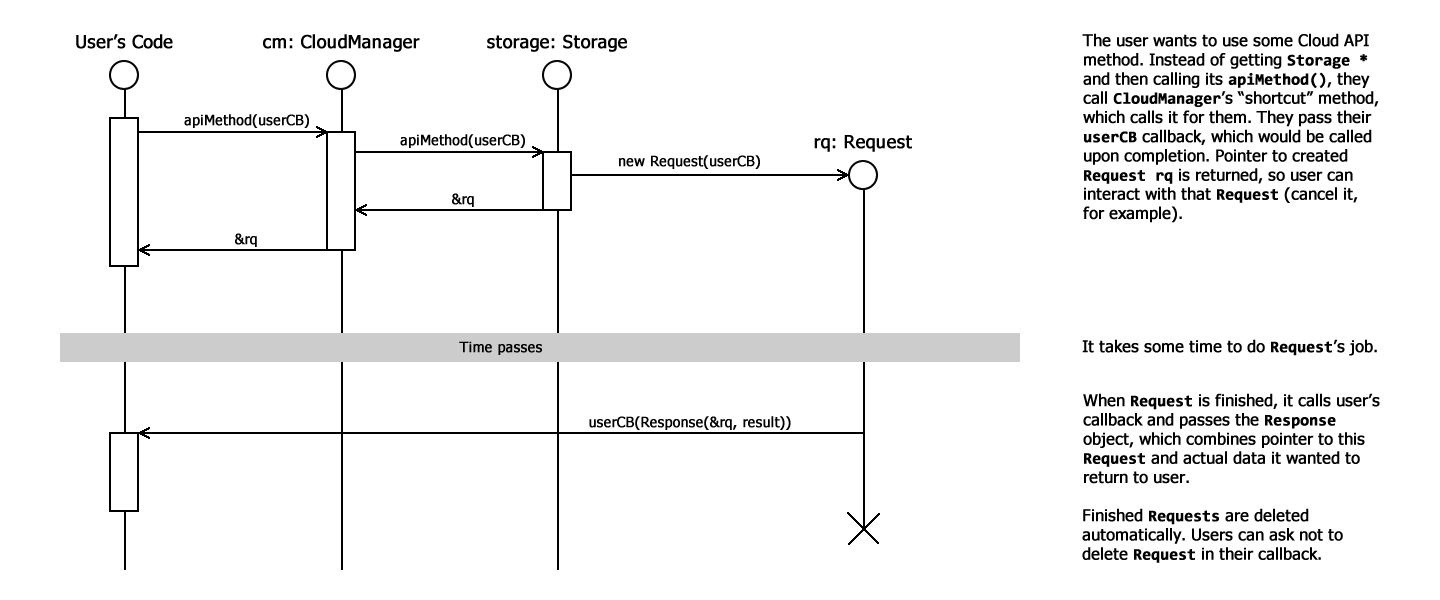

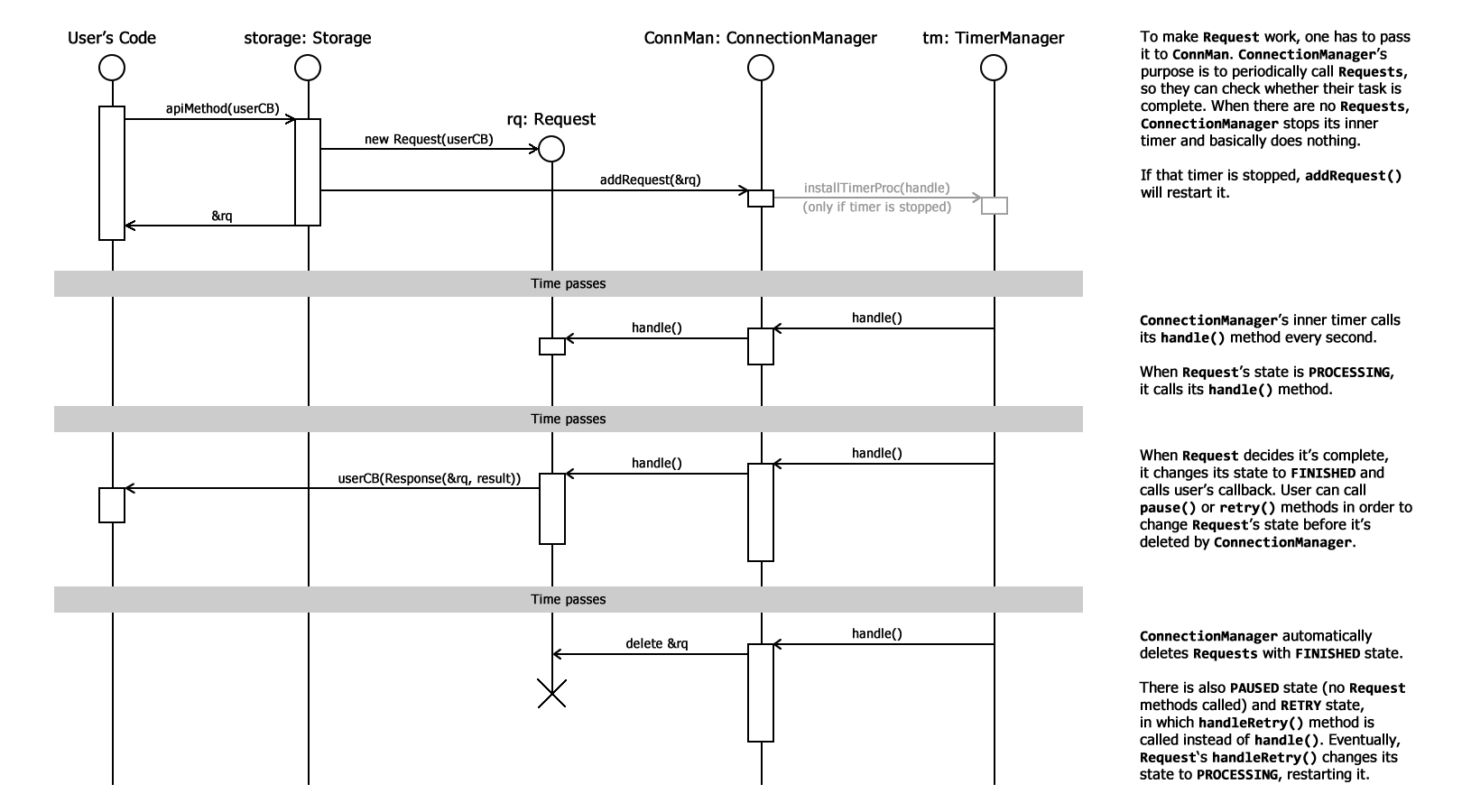

Then we decided I should «upgrade» our Requests/ConnectionManager system by adding a RequestInfo struct and giving each Request an id. The user could use an id to locate the Request through ConnMan and ask it to change its state. That’s mostly needed when we want to make the same request again: for example, if some error occurred, we probably would like to try again in a few seconds. This system added a RETRY state for Requests, so the user’s code can easily ask to retry a Request. The original idea with RequestInfo and ids was later rethought, so now we’re actually using pointers to Request instances. That way one can easily affect a Request: cancel, pause or restart it with its methods.

With that system I could easily implement the OneDriveTokenRefresher. That might not be the best class name, but it describes it quite well =) OneDrive access tokens expire after an hour, so it would be very sad to get an error just because you’ve been working with the app for more than an hour. This class wraps the usual CurlJsonRequest (which is used to receive the JSON representation of the server’s response) to hide errors regarding an expired token. Basically, it just peeks into the received JSON and, if there is an error message, refreshes the token and then tries to do the original request again, using the new token. With that new system, it just pauses the original request and then retries it; there is no need to save the original request’s parameters somewhere because they are just stored along with the paused request.
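
The refresh-and-retry logic boils down to something like the following. This is a synchronous sketch under stated assumptions, not the real asynchronous class: Reply, SendFn, RefreshFn and sendWithRefresh() are hypothetical names, and the tokenExpired flag stands in for peeking into the received JSON.

```cpp
#include <cassert>
#include <functional>
#include <string>

// Hypothetical reply type; the real class inspects a parsed JSON response.
struct Reply {
    bool tokenExpired;
    std::string body;
};

using SendFn = std::function<Reply(const std::string &token)>;
using RefreshFn = std::function<std::string()>;

// Sends the request; if the reply reports an expired token, refreshes the
// token once and repeats the same request with the new one.
Reply sendWithRefresh(const SendFn &send, const RefreshFn &refresh, std::string &token) {
    assert(send && refresh);
    Reply reply = send(token);
    if (reply.tokenExpired) {
        token = refresh();    // the caller's token is updated for later requests
        reply = send(token);  // same request, its parameters are still at hand
    }
    return reply;
}
```

The caller never sees the "expired token" reply, only the result of the retried request.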

After I finished that useful token refreshing class, I implemented OneDriveStorage::download(), thus completing the second week’s plan too. The plan also has an «auto detection» feature, which is being delayed a little because it would be called from the GUI and I’m not working on the GUI yet. However, there was no folder downloading in my plan, and it’s obviously a must-have feature, so that’s what I did next. The FolderDownloadRequest uses Storage’s listDirectory() and download() methods, so it’s easy to implement and it works with all Storage implementations which have these methods working.

I actually implemented OneDriveStorage’s listDirectory() a little bit later because there is no way to list directories recursively in OneDrive’s API. Dropbox has such an option, so it takes me one API call to list a whole directory and the contents of all its subdirectories. To list OneDrive directories recursively, I had to write a special OneDriveListDirectoryRequest class, which lists the directory with one API call, then lists each of its subdirectories with other calls, then their subdirectories, and so on until it lists it all.
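
A recursive listing built from single-level calls can be sketched with a queue of directories still to visit. The names Item, ListOneLevel and listRecursively() are hypothetical, and the callback stands in for one API call that lists a single directory.

```cpp
#include <cassert>
#include <deque>
#include <functional>
#include <string>
#include <vector>

struct Item {
    std::string path;
    bool isDirectory;
};

// Hypothetical stand-in for an API that lists only one directory per call.
using ListOneLevel = std::function<std::vector<Item>(const std::string &dir)>;

// Lists `root` and everything below it, one call per directory.
std::vector<Item> listRecursively(const ListOneLevel &listOneLevel, const std::string &root) {
    assert(listOneLevel);
    std::vector<Item> result;
    std::deque<std::string> pending{root};
    while (!pending.empty()) {
        std::string dir = pending.front();
        pending.pop_front();
        for (const Item &item : listOneLevel(dir)) {
            result.push_back(item);
            if (item.isDirectory)
                pending.push_back(item.path);  // visit this subdirectory later
        }
    }
    return result;
}
```

The number of calls equals the number of directories in the tree, which is why a single recursive call, where the API offers one, is so much cheaper.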

I’ve been asked to draw some sequence diagrams explaining all these Requests/ConnectionManager systems, so that’s what I’m going to do now. I’m not a fan of sequence diagrams and I’m going to draw them in my own style, which, I believe, makes them simpler.

UPD: here they come, two small diagrams (available on ScummVM wiki too):

File sync comes next week and I guess I’m going to discuss that feature a lot before I actually start implementing it.